Brain Controlled Robotics

This is an overview of Myelin, a brain-controlled interface for navigating robots. I built it for my undergrad senior-year engineering project back in 2010. Here's a video of it in action:

What you're seeing in the video is a driver navigating the robot through an improvised maze. In front of the driver is a screen showing four different flashing patterns. Each of these patterns corresponds to a navigation command: drive straight, turn left, turn right, or stop. In the center of the screen is a video feed from a webcam mounted on top of the robot.

To send a command to the robot, the driver looks at the corresponding pattern on the screen. The cap that he's wearing has electrodes attached to it which measure his neural activity. More specifically, we're interested in the signals generated by his occipital lobe, which is the visual processing center of the human brain.

These signals are then analyzed by Myelin's classification engine, which uses digital signal processing and machine learning algorithms to figure out the pattern the driver is currently looking at. Once it figures this out, the command is relayed wirelessly to the robot.

The art and science of mind reading

Myelin relies on a neural phenomenon known as the Steady State Visually Evoked Potential (SSVEP). The gist of it is this: when you observe a visual stimulus that's flashing at a fixed frequency (like the checkerboard patterns in the video), your visual cortex generates activity at that same frequency, or at one of its harmonics.

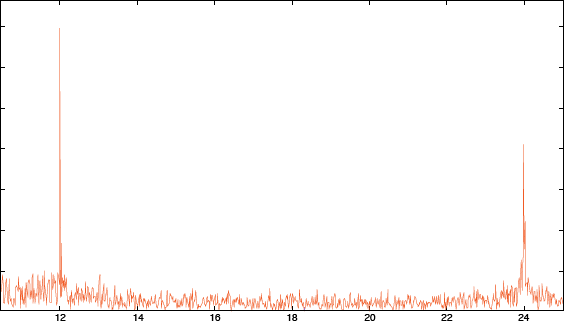

The frequency spectrum below was generated from EEG data collected over the course of a minute while the subject looked at a checkerboard pattern flashing at 6 Hz. You can clearly see peaks at the second and fourth harmonics, 12 Hz and 24 Hz.

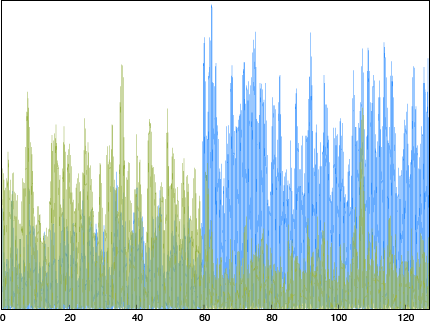
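
To make the idea concrete, here's a minimal sketch that builds a synthetic signal with components at 12 Hz and 24 Hz buried in noise, then evaluates the spectrum one frequency at a time. This is not Myelin's code and not real EEG data: the 256 Hz sample rate, the amplitudes, the noise level, and the function names are all assumptions chosen for illustration.

```python
import math
import random

SAMPLE_RATE = 256  # Hz; assumed for illustration, not taken from the real setup


def synthetic_eeg(duration_s, components, noise_sigma=1.0, seed=0):
    """Sum of sinusoids given as (frequency_hz, amplitude) plus Gaussian noise."""
    rng = random.Random(seed)
    n_samples = int(duration_s * SAMPLE_RATE)
    return [
        sum(a * math.sin(2 * math.pi * f * i / SAMPLE_RATE) for f, a in components)
        + rng.gauss(0.0, noise_sigma)
        for i in range(n_samples)
    ]


def magnitude_at(samples, freq_hz):
    """Amplitude estimate at one frequency (a single-bin discrete Fourier transform)."""
    re = sum(x * math.cos(2 * math.pi * freq_hz * i / SAMPLE_RATE)
             for i, x in enumerate(samples))
    im = sum(x * math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
             for i, x in enumerate(samples))
    return 2.0 * math.hypot(re, im) / len(samples)


# Stand-in for the response to a 6 Hz checkerboard: energy at 12 Hz and 24 Hz.
signal = synthetic_eeg(10, [(12.0, 1.0), (24.0, 0.5)])
spectrum = {f: magnitude_at(signal, f) for f in range(1, 41)}
peaks = sorted(spectrum, key=spectrum.get, reverse=True)[:2]
print(sorted(peaks))
```

The two largest entries in the spectrum land on the injected frequencies even though the noise is as large as the stronger component, which is why a long recording like the one above gives such clean peaks.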

So, figuring out which pattern the driver is looking at is a matter of detecting the dominant frequency. The graph below shows the magnitude of two distinct frequencies plotted against time. We start with the driver looking at the pattern corresponding to the green frequency. About a minute into the experiment (the 60-second mark in the graph below), the driver switches over to the other pattern. You can see the corresponding change in magnitude, where the frequency shown in blue becomes dominant.
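
Here's a scaled-down sketch of that experiment on synthetic data. It tracks the power at two frequencies over consecutive windows using the Goertzel algorithm, a cheap way to evaluate a single frequency bin. The frequencies (12 Hz and 15 Hz), the sample rate, the window length, and the 10-second switch point are assumptions made up for this example, and Goertzel is my choice here rather than something Myelin is known to use.

```python
import math
import random

SAMPLE_RATE = 256                   # Hz; assumed
WINDOW_S = 2                        # seconds per analysis window; assumed
FREQ_GREEN, FREQ_BLUE = 12.0, 15.0  # hypothetical stimulus frequencies


def goertzel_power(samples, freq_hz):
    """Squared DFT magnitude at freq_hz, computed with the Goertzel recurrence."""
    coeff = 2.0 * math.cos(2.0 * math.pi * freq_hz / SAMPLE_RATE)
    s_prev = s_prev2 = 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def make_recording(switch_s=10, total_s=20, seed=1):
    """Noisy sinusoid that jumps from the green to the blue frequency at switch_s."""
    rng = random.Random(seed)
    samples = []
    for i in range(total_s * SAMPLE_RATE):
        t = i / SAMPLE_RATE
        freq = FREQ_GREEN if t < switch_s else FREQ_BLUE
        samples.append(math.sin(2 * math.pi * freq * t) + rng.gauss(0.0, 1.0))
    return samples


def dominant_per_window(samples):
    """Label each non-overlapping window with the stronger of the two frequencies."""
    step = WINDOW_S * SAMPLE_RATE
    labels = []
    for start in range(0, len(samples) - step + 1, step):
        window = samples[start:start + step]
        green = goertzel_power(window, FREQ_GREEN)
        blue = goertzel_power(window, FREQ_BLUE)
        labels.append("green" if green > blue else "blue")
    return labels


labels = dominant_per_window(make_recording())
print(labels)
```

With these settings the first five windows come out "green" and the last five "blue", mirroring the crossover in the graph.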

Things get slightly trickier when working in real time with multiple frequencies and short time windows. However, the general principle remains the same.
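
As a rough illustration of the multi-frequency case, the sketch below scores four candidate commands on a one-second window and refuses to decide when the winner isn't clearly ahead. It is a deliberately simple stand-in, not Myelin's classification engine (which used machine learning). The command-to-frequency mapping, the choice to sum the fundamental and second harmonic, and the `min_ratio` threshold are all assumptions.

```python
import cmath
import math
import random

SAMPLE_RATE = 256  # Hz; assumed

# Hypothetical flicker frequencies; the real values used by Myelin are not given.
COMMANDS = {"straight": 6.0, "left": 7.0, "right": 8.0, "stop": 9.0}


def power_at(samples, freq_hz):
    """Normalized power of the window at a single frequency."""
    acc = sum(x * cmath.exp(-2j * math.pi * freq_hz * n / SAMPLE_RATE)
              for n, x in enumerate(samples))
    return abs(acc) ** 2 / len(samples)


def classify(samples, min_ratio=2.0):
    """Return the best-scoring command, or None if it is not a clear winner."""
    scores = {
        cmd: sum(power_at(samples, harmonic * freq) for harmonic in (1, 2))
        for cmd, freq in COMMANDS.items()
    }
    ranked = sorted(scores, key=scores.get, reverse=True)
    best, runner_up = ranked[0], ranked[1]
    if scores[best] <= min_ratio * scores[runner_up]:
        return None  # ambiguous window: better to send nothing than a wrong command
    return best


# One-second synthetic window with a response at 16 Hz, the second harmonic
# of the hypothetical "right" pattern.
rng = random.Random(2)
window = [math.sin(2 * math.pi * 16.0 * n / SAMPLE_RATE) + rng.gauss(0.0, 0.5)
          for n in range(SAMPLE_RATE)]
print(classify(window))
```

The "no decision" branch matters more as windows get shorter: with less data, noise alone can make one frequency look dominant, and for a moving robot a skipped command is usually cheaper than a wrong one.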

Aside from SSVEP, another common approach to building brain-computer interfaces is the P300 evoked response, elicited with the oddball paradigm.

The Implementation

The original prototyping for Myelin was done using MATLAB and Weka.

The final implementation shown in the video consists of the following:

Hardware

- iRobot Create — a programmable version of the Roomba

- g.USBAmp — for EEG acquisition

- Gamma cap — for electrodes

Software

- Real-time classification engine — C++

- Reverse engineered acquisition driver for the biosignal amplifier — C++, libusb

- Stimuli presentation UI — C++, OpenGL, Qt

- RPC daemon for remotely controlling the robot — Python
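
To give a feel for the robot-side piece, here's a minimal sketch of how a Python RPC daemon could turn the four navigation commands into iRobot Create drive packets. It is not the original daemon: the use of XML-RPC, the `send_command` name, the port, and the speeds are assumptions. The opcode and the special radius values follow the Create Open Interface's Drive command, and `write` is a placeholder for whatever sends bytes to the robot's serial port.

```python
import struct
from xmlrpc.server import SimpleXMLRPCServer

DRIVE_OPCODE = 137         # "Drive" in the iRobot Create Open Interface
STRAIGHT = 32767           # special radius value (0x7FFF): drive straight
SPIN_CCW, SPIN_CW = 1, -1  # special radius values: turn in place

# command name -> (velocity in mm/s, radius); the speeds are arbitrary choices
COMMANDS = {
    "straight": (200, STRAIGHT),
    "left": (100, SPIN_CCW),
    "right": (100, SPIN_CW),
    "stop": (0, 0),
}


def encode_drive(velocity_mm_s, radius_mm):
    """Pack a Drive packet: opcode, then two signed 16-bit big-endian values."""
    return struct.pack(">Bhh", DRIVE_OPCODE, velocity_mm_s, radius_mm)


def make_handler(write):
    """Build the RPC handler; `write` is any callable that accepts bytes."""
    def send_command(name):
        if name not in COMMANDS:
            return False
        write(encode_drive(*COMMANDS[name]))
        return True
    return send_command


def serve(write, host="0.0.0.0", port=8000):
    """Expose send_command over XML-RPC and block forever (hypothetical setup)."""
    server = SimpleXMLRPCServer((host, port))
    server.register_function(make_handler(write), "send_command")
    server.serve_forever()
```

On the driver's side, something like `xmlrpc.client.ServerProxy("http://robot-host:8000").send_command("left")` would then trigger a turn, where `robot-host` is a placeholder. A real daemon would also need to put the robot into Open Interface mode (the Start and Safe or Full commands) before any Drive packets are accepted.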